Lecture 3: Sampling, Missingness, and Bias

PSTAT100: Data Science - Concepts and Analysis

May 23, 2026

🚁 Overview

Aims of the lecture

- Concepts of sampling and statistical bias:

- Understand the different types of sampling mechanisms.

- Learn how to critically assess data quality based on how it was collected.

- Understand statistical bias and how the sampling design determines the scope of inference.

- Concepts of missing data:

- Understand the different types of missingness.

- Learn how to handle missing data.


- Case study: voter fraud:

- Steven Miller’s analysis of ‘Voter Integrity Fund’ surveys

- Sources of bias

- Ethical issues

📚 Required Libraries

In this lecture we will be using the following libraries:
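The import cell did not survive in this copy of the slides. Judging from the code later in the lecture, which calls `np.random` and pandas DataFrame methods, it was presumably along these lines. The aliases `np` and `pd` are the usual conventions and are assumed here.

```python
# Assumed imports: the later code uses the `np` alias and pandas DataFrames.
import numpy as np
import pandas as pd
```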

Sampling and statistical bias

Sampling Terminology

Here we’ll introduce standard statistical terminology to describe data collection.

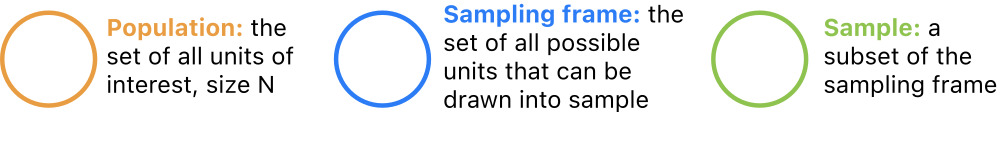

Populations and Samples

- All data are collected somehow.

- A sampling design is a way of selecting observational units for measurement.

- It can be construed as a particular relationship between:

- a population (all entities of interest);

- a sampling frame (all entities that are possible to measure); and

- a sample (a specific collection of entities).

Population

Remember Observational Units

- Last week, we introduced the terminology observational unit to mean a certain (usually physical) entity measured for a study.

- Using this terminology, datasets consist of observations made on observational units.

- All data are data on some kind of thing, such as countries, species, locations, etc.

Populations

A statistical population is the collection of all units of interest. For example:

- All countries (GDP data).

- All mammal species (Allison 1976).

- All babies born in the US (babynames data).

Sampling Frame

Unmeasurable Units

- There are usually some units in a population that can’t be measured due to practical constraints

- Example: many adult U.S. residents don’t have phones or addresses.

Sampling Frame

- For this reason, it is useful to introduce the concept of a sampling frame, which refers to the collection of all units in a population that can be observed for a study.

- Some examples might be:

- All countries reporting economic output between 1961 and 2019.

- All nonendangered mammals that die of natural causes in monitored areas.

- All babies with birth certificates from U.S. hospitals born between 1990 and 2018.

Sample

Sample of Measurable Units

- Finally, it’s rarely feasible to measure every observable unit due to limited data collection resources

- States don’t have the time or money to call every phone number every year.

- A sample is a collection of units in the sampling frame actually selected for study. For instance:

- 234 countries;

- 62 mammal species;

- 13,684,689 babies born in CA;

Sampling

Common Scenarios

We can now imagine a few common sampling scenarios by varying the relationship between population, frame, and sample.

Let’s introduce some notation. Denote an observational unit by \(U_i\), and let:

\[ \begin{alignat*}{2} \mathscr{U} &= \{U_i\}_{i \in I} &&\quad(\text{universe}) \\ P &= \{U_1, \dots, U_N\} &&\quad(\text{population}) \\ F &= \{U_j: j \in J \subset I\} &&\quad(\text{frame})\\ S &\subseteq F &&\quad(\text{sample}) \end{alignat*} \]

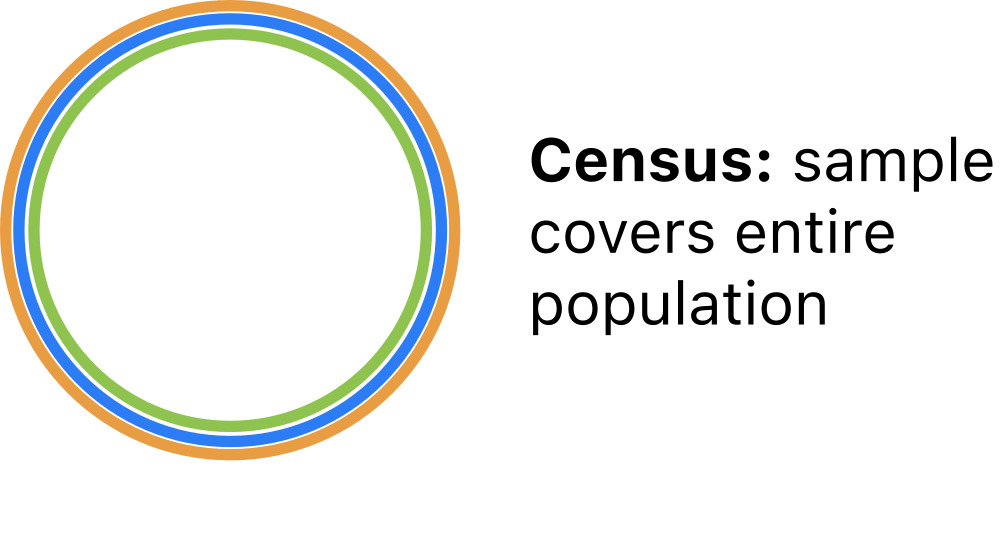

Sampling Scenarios: Population Census

Perhaps the simplest scenario is a population census, where the entire population is observed. In this case:

\[S = F = P\]

Population Census.

From a census, all properties of the population are definitively known.

- No need to model census data!

Sampling Scenarios: Simple Random Sample

The statistical gold standard is the simple random sample (SRS) in which units are selected at random from the population. In this case:

\[S \subset F = P\]

Simple Random Sample.

From a SRS, sample properties are reflective of population properties.

- Can safely extrapolate from the sample to the population.
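As a minimal sketch of this scenario, the snippet below draws a simple random sample from a made-up population and compares the sample mean with the population mean. The population values, the sample size, and the variable names are illustrative assumptions, not data from the lecture.

```python
import numpy as np

rng = np.random.default_rng(100)

# Hypothetical population: one numeric measurement per unit (made-up values).
population = rng.gamma(shape=2.0, scale=10.0, size=10_000)

# Simple random sample: every unit equally likely, drawn without replacement.
srs = rng.choice(population, size=500, replace=False)

print(f"population mean: {population.mean():.2f}")
print(f"sample mean:     {srs.mean():.2f}")
```

The two means should be close, and repeating the draw with other seeds moves the sample mean around the population mean rather than away from it.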

Sampling Scenarios: Typical Sample

More common in practice is a SRS from a sampling frame that overlaps with the population but does not cover the population. In this case:

\[S \subset F \quad\text{and}\quad F \cap P \neq \emptyset\]

Typical Sample.

In this scenario, sample properties are reflective of the frame.

- Can extrapolate to a subpopulation, but not the full population.
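A small sketch of why the sample reflects the frame rather than the population. It assumes the special case where the frame is a subset of the population, and the cutoff that defines the frame is made up for illustration.

```python
import numpy as np

rng = np.random.default_rng(100)

# Hypothetical population of 10,000 units with a made-up measurement.
values = rng.normal(loc=50.0, scale=10.0, size=10_000)

# Assumed frame: only units with values above 45 can be reached (undercoverage).
frame = values[values > 45.0]

# Simple random sample from the frame, not from the population.
sample = rng.choice(frame, size=500, replace=False)

print(f"population mean: {values.mean():.2f}")
print(f"frame mean:      {frame.mean():.2f}")
print(f"sample mean:     {sample.mean():.2f}")
```

The sample mean tracks the frame mean, so conclusions carry over to the frame (a subpopulation) but not to the full population.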

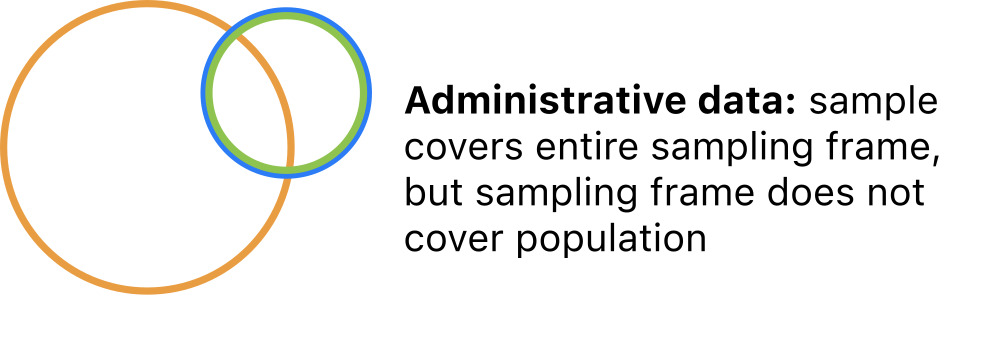

Sampling scenarios: administrative data

Also common is administrative data in which all units are selected from a convenient frame that partly covers the population. In this case:

\[S = F \quad\text{and}\quad F\cap P \neq \emptyset\]

Administrative Data.

Administrative data are singular; they do not represent any broader group.

- No reliable extrapolation is possible.

Extrapolation

Generalizing from Samples

- The relationships among the population, frame, and sample determine the scope of inference.

- The extent to which conclusions based on the sample are generalizable.

- A good sampling design can ensure that the statistical properties of the sample are expected to match those of the population. If so, it is sound to generalize:

- The sample is said to be representative of the population

- And the scope of inference is broad.

A poor sampling design will produce samples that distort the statistical properties of the population. If so, it is not sound to generalize:

- Sample statistics are subject to bias

- Scope of inference is narrow.

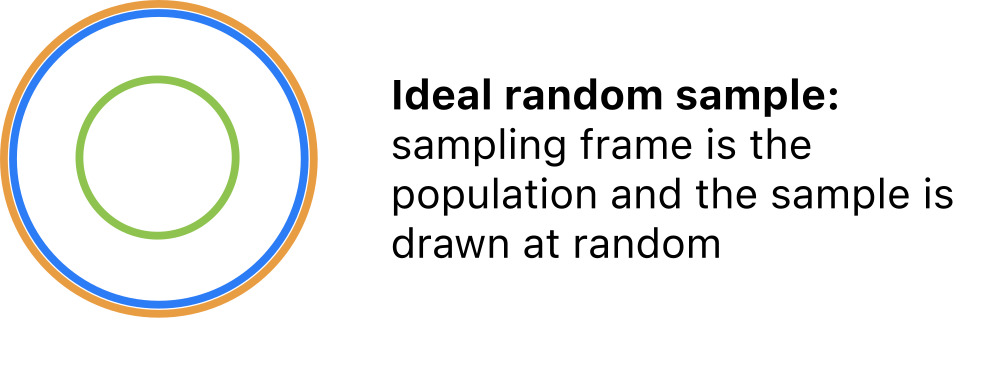

Characterizing Sampling Designs

Design Attributes

The sampling scenarios above can be differentiated along two key attributes:

- The overlap between the sampling frame and the population.

- frame \(=\) population

- frame \(\subset\) population

- frame \(\cap\) population \(\neq \emptyset\)

- The mechanism of obtaining a sample from the sampling frame.

- Census.

- Random sample.

- Probability sample.

- Nonrandom (convenience) sample

If you can articulate these two points, you have fully characterized the sampling design.

Inclusion Probabilities

Some more terminology…

In order to describe sampling mechanisms precisely, we need a little terminology.

For any way of drawing a sample from a frame, each unit has some inclusion probability

- The probability of being included in the sample.

Let’s suppose that the frame \(F\) comprises \(N\) units, and denote the inclusion probabilities by:

\[p_i = P(\text{unit } i \text{ is included in the sample})\]

The inclusion probability of each unit is usually determined by the physical procedure of collecting data, rather than fixed a priori.

Sampling mechanisms

What are Sampling Mechanisms?

Sampling mechanisms are methods of drawing samples and are categorized into four types based on inclusion probabilities.

In a census every unit is included:

- \(p_i = 1\) for every unit \(i = 1, \dots, N\).

In a random sample every unit is equally likely to be included:

- \(p_i = p_j\) for all units \(i, j = 1, \dots, N\); for a simple random sample of size \(n\) drawn without replacement, \(p_i = \frac{n}{N}\).

In a probability sample units have different inclusion probabilities:

- \(p_i \neq p_j\) for at least one \(i \neq j\).

In a nonrandom sample inclusion probabilities are indeterminate:

- \(p_i = \;?\) for at least one unit \(i\).
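Inclusion probabilities can be approximated by repeating a sampling procedure many times and counting how often each unit is selected. The sketch below does this for a simple random sample without replacement, where each unit's inclusion probability is n/N. The frame size, sample size, and number of repetitions are arbitrary choices.

```python
import numpy as np

rng = np.random.default_rng(100)

N, n, reps = 20, 5, 20_000   # frame size, sample size, repeated draws

# Count how often each unit in the frame lands in a simple random sample.
counts = np.zeros(N)
for _ in range(reps):
    chosen = rng.choice(N, size=n, replace=False)
    counts[chosen] += 1

p_hat = counts / reps   # empirical inclusion probabilities
print(np.round(p_hat, 3))   # each entry should be close to n / N = 0.25
```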

Let’s characterize the sampling designs of some example datasets.

GDP Data

GDP Data

Annual observations of GDP growth for 234 countries from 1961 to 2019.

| Country Name | Country Code | 1961 | 1962 | 1963 | 1964 | 1965 | 1966 | 1967 | 1968 | 1969 | ... | 2010 | 2011 | 2012 | 2013 | 2014 | 2015 | 2016 | 2017 | 2018 | 2019 |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| Aruba | ABW | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | ... | -3.685029 | 3.446055 | -1.369863 | 4.198232 | 0.300000 | 5.700001 | 2.100000 | 1.999999 | NaN | NaN |
| Afghanistan | AFG | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | ... | 14.362441 | 0.426355 | 12.752287 | 5.600745 | 2.724543 | 1.451315 | 2.260314 | 2.647003 | 1.189228 | 3.911603 |
| Angola | AGO | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | ... | 4.403933 | 3.471976 | 8.542188 | 4.954545 | 4.822628 | 0.943572 | -2.580050 | -0.147213 | -2.003630 | -0.624644 |
| Albania | ALB | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | ... | 3.706892 | 2.545322 | 1.417526 | 1.001987 | 1.774487 | 2.218752 | 3.314805 | 3.802197 | 4.071301 | 2.240070 |
| Andorra | AND | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | ... | -1.974958 | -0.008070 | -4.974444 | -3.547597 | 2.504466 | 1.434140 | 3.709678 | 0.346072 | 1.588765 | 1.849238 |

5 rows × 60 columns

- Population: all countries existing between 1961-2019.

- Frame: all countries reporting economic output for some year between 1961 and 2019.

- Sample: equal to frame.

GDP Data Scope

- Overlap: frame partly overlaps population.

- Mechanism: sample is a census of the frame.

This is administrative data. Scope of inference: none.

Mammal Data

Mammal data

Observations of average brain and body weights for 62 mammal species.

| | species | body_weight | brain_weight | slow_wave | paradox |
|---|---|---|---|---|---|
| 0 | African elephant | 6654.000 | 5712.0 | NaN | NaN |
| 1 | African giant pouched rat | 1.000 | 6.6 | 6.3 | 2.0 |
| 2 | Arctic fox | 3.385 | 44.5 | NaN | NaN |
| 3 | Arctic ground squirrel | 0.920 | 5.7 | NaN | NaN |
| 4 | Asian elephant | 2547.000 | 4603.0 | 2.1 | 1.8 |

- Population: all mammals.

- Frame: all mammal species that die of natural causes in monitored areas.

- Sample: individuals from 62 species sampled opportunistically.

Mammal Data Scope

- Overlap: frame is a subset of population.

- Mechanism: convenience sampling.

Let’s call this convenience data. Scope of inference: none.

Baby Names Data

Baby Names Data

Records of given names of babies in CA from 1990 - 2018.

| | State | Sex | Year | Name | Count |
|---|---|---|---|---|---|
| 0 | CA | F | 1990 | Jessica | 6635 |
| 1 | CA | F | 1990 | Ashley | 4537 |
| 2 | CA | F | 1990 | Stephanie | 4001 |
| 3 | CA | F | 1990 | Amanda | 3856 |
| 4 | CA | F | 1990 | Jennifer | 3611 |

- Population: all babies born in CA between 1990 and 2018.

- Frame: all babies born in CA between 1990 and 2018.

- Sample: equal to frame.

Baby Names Data

- Overlap:

- Mechanism:

This is __________________ data. Scope of inference: ______.

Statistical Bias

Statistical bias

What is bias?

- Statistical bias is the average difference between a sample property and a population property across all possible samples under a particular sampling design.

- In short, it arises from systematic over- or under-representation of units.
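The definition can be made concrete by simulation: draw many samples under a design, average the sample statistic, and subtract the population value. The sketch below compares a simple random sample with a deliberately distorted design in which larger units are more likely to be selected. The population, the weighting rule, and the helper name `average_sample_mean` are all hypothetical.

```python
import numpy as np

rng = np.random.default_rng(100)

# Hypothetical population (made-up values).
population = rng.exponential(scale=10.0, size=5_000)
true_mean = population.mean()

# Assumed biased design: selection probability roughly proportional to the
# value itself, so large units are systematically over-represented.
weights = population / population.sum()

def average_sample_mean(p, reps=2_000, n=100):
    """Average of the sample mean over many samples drawn with probabilities p."""
    means = [rng.choice(population, size=n, replace=False, p=p).mean()
             for _ in range(reps)]
    return float(np.mean(means))

bias_srs = average_sample_mean(None) - true_mean
bias_weighted = average_sample_mean(weights) - true_mean
print(f"approx. bias, simple random sample: {bias_srs:.3f}")
print(f"approx. bias, size-biased design:   {bias_weighted:.3f}")
```

Averaging over 2,000 samples only approximates the average over all possible samples, but it is enough to show one design centered on the population mean and the other shifted well above it.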

Data quality

Making Judgements

- It’s easy to fall into value judgements about a dataset based on scope of inference and bias.

- But there’s nothing inherently better or worse about the scenarios we’ve considered.

- Our goal in all this is to create a framework for understanding the limitations of a given dataset so that we can make pragmatic choices about how to use it that align with the information it has to offer.

It may help to map the scenarios we’ve considered onto an ‘informativeness’ spectrum:

Missing Data

The Missing Data Problem

Missingness

Missing data arise when one or more variable measurements fail for a subset of observations.

- Missingness modulates sampling design.

- Types of missingness: MCAR, MAR, and MNAR.

- Common in practice due to, for instance:

- Equipment failure.

- Sample contamination or loss.

- Respondents leaving questions blank.

- Attrition of study participants (dropping out).

- Missingness gets a bad rap.

- It is often derided, downplayed, minimized, and overlooked.

- There is publication bias against studies with lots of missing data.

- Many researchers and data scientists ignore it by simply deleting affected observations.

- But it’s a reality in most datasets, and deserves attention.

Missing representations

NA, NaN, NULL, None, etc.

- It is standard practice to record observations with missingness but enter a special symbol (.., -, NA, etcetera) for missing values.

- In Python, missing values are mapped to a special float: NaN.

- Pandas has the ability to map specified entries to NaN when parsing data files.

Missing Data Example

Here is some made-up data with two missing values:
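The original example cell is not reproduced in this copy of the slides. As a stand-in, here is a small made-up table with two missing values; the column names and numbers are invented for illustration.

```python
import numpy as np
import pandas as pd

# Made-up measurements; np.nan marks the two missing values.
some_data = pd.DataFrame({
    "obs": [1, 2, 3, 4, 5],
    "value": [2.1, np.nan, 3.7, 4.0, np.nan],
})

print(some_data)
print(some_data["value"].isna().sum())   # number of missing values
```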

Missing representations

Pandas has the ability to map specified entries to NaN when parsing data files:
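One way to do this is the `na_values` argument of `pd.read_csv`. The sketch below parses a made-up file held in memory in which `-` and `..` stand for missing values; the file contents and column names are hypothetical.

```python
import io
import pandas as pd

# Made-up file contents in which '-' and '..' stand for missing values.
raw = io.StringIO("id,height,weight\n1,170,-\n2,..,65\n3,182,80\n")

# na_values lists the extra strings that should be parsed as NaN.
parsed = pd.read_csv(raw, na_values=["-", ".."])

print(parsed)
print(parsed.dtypes)
```

Without `na_values`, the two affected columns would be read as text rather than numbers, because `-` and `..` are not among the strings pandas treats as missing by default.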

Calculations with NaNs

NaNs propagate through calculations on numpy arrays: for example, the mean of an array containing a NaN is itself NaN.

However, the default behavior in pandas is to ignore the NaN’s, which allows the computation to proceed:

But here’s the rub: those missing values could have been anything, and ignoring them changes the result from what it would have been!
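A minimal illustration of the two behaviors, using made-up values. `np.nanmean` is included as numpy's explicit NaN-skipping counterpart.

```python
import numpy as np
import pandas as pd

values = [1.0, 2.0, np.nan, 4.0]   # made-up values

array_mean = np.mean(np.array(values))    # the NaN propagates: result is NaN
series_mean = pd.Series(values).mean()    # pandas skips NaN by default (skipna=True)
nan_mean = np.nanmean(np.array(values))   # numpy variant that skips NaN explicitly

print(array_mean, series_mean, nan_mean)
```

The pandas result is the mean of the three observed values only. Whether that is a sensible stand-in for the mean of all four depends on why the value is missing.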

The missing data problem

In a nutshell, the missing data problem is:

How should missing values be handled in a data analysis?

Getting the software to run is one thing, but this alone does not address the challenges posed by the missing data. Unless the analyst, or the software vendor, provides some way to work around the missing values, the analysis cannot continue because calculations on missing values are not possible. There are many approaches to circumvent this problem. Each of these affects the end result in a different way. (Stef van Buuren, 2018)

- There’s no universal approach to the missing data problem. The choice of method depends on:

- The analysis objective;

- The missing data mechanism.

Missing data in PSTAT100

Covering the Basics

- We will briefly cover the topic of missing data in this course.

- Specifically we will look into:

- How to inspect for missing data.

- Some simple options for handling missing data.

- Characterizing types of missingness (missing data mechanisms).

- Understanding missingness as a potential source of bias.

- Basic do’s and don’t’s when it comes to missingness.

Further Reading

If you are interested in the topic, Stef van Buuren’s Flexible Imputation of Missing Data (the source of one of your readings this week) provides an excellent introduction.

Missing data mechanisms

Standard Framework

- The standard framework for understanding missingness is much like that for understanding sampling.

- Just as every unit has a probability of being selected in a sample, every observation has some probability of going missing.

A missing data mechanism is a process causing missingness.

Suppose we have a dataset \(\mathbf{X}\) (tidy) consisting of \(n\) rows/observations and \(p\) columns/variables, and define:

\[q_{ij} = P(x_{ij} \text{ is missing})\]

- Missing data mechanisms are classified into three categories based on these probabilities:

- Missing completely at random (MCAR)

- Missing at random (MAR)

- Missing not at random (MNAR)

Missing Completely at Random (MCAR)

MCAR Definition

Data are missing completely at random (MCAR) if the probabilities of missing entries are uniformly equal.

\[q_{ij} = q \quad\text{for all}\quad i, j\]

This implies that the cause of missingness is unrelated to the data: missing values can be ignored.

This is the easiest scenario to handle.

Missing at Random (MAR)

MAR Definition

Data are missing at random (MAR) if the probabilities of missing entries depend on observed data.

\[q_{ij} = f(\mathbf{x}_i)\]

This implies that information about the cause of missingness is captured within the dataset: it is possible to model the missing data.

Missing data methods typically address this scenario.

Missing Not at Random (MNAR)

MNAR Definition

Data are missing not at random (MNAR) if the probabilities of missing entries depend on unobserved data.

\[q_{ij} = \; ?\]

- This implies that information about the cause of missingness is unavailable.

- This is the most complicated scenario.
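The three mechanisms can be contrasted in a small simulation. The sketch below uses made-up data with two variables, `age` and `income`, and lets only `income` go missing. The variable names, the thresholds, and the missingness probabilities are all assumptions chosen for illustration.

```python
import numpy as np
import pandas as pd

rng = np.random.default_rng(100)
n = 20_000

# Made-up data: income depends on age; only income can go missing.
age = rng.uniform(20, 70, size=n)
income = 20 + 0.8 * age + rng.normal(0, 5, size=n)
df = pd.DataFrame({"age": age, "income": income})
full_mean = df["income"].mean()

# Missingness probabilities q under three assumed mechanisms.
mechanisms = {
    "MCAR": np.full(n, 0.3),                        # same for every entry
    "MAR": np.where(df["age"] > 45, 0.5, 0.1),      # depends on observed age
    "MNAR": np.where(df["income"] > 56, 0.5, 0.1),  # depends on income itself
}

observed_means = {}
for name, q in mechanisms.items():
    missing = rng.random(n) < q
    observed_means[name] = df.loc[~missing, "income"].mean()
    print(f"{name}: mean of observed income = {observed_means[name]:.2f}")

print(f"full-data mean = {full_mean:.2f}")
```

Under MCAR the observed mean stays close to the full-data mean. Under MAR and MNAR it drifts downward, but only in the MAR case does the dataset contain the variable (`age`) needed to model and correct the distortion.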

GDP Missingness Example

In the GDP growth data, growth measurements are missing for many countries before a certain year.

| Country Name | Country Code | 1961 | 1962 | 1963 | 1964 | 1965 | 1966 | 1967 | 1968 | 1969 | ... | 2010 | 2011 | 2012 | 2013 | 2014 | 2015 | 2016 | 2017 | 2018 | 2019 |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| Aruba | ABW | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | ... | -3.685029 | 3.446055 | -1.369863 | 4.198232 | 0.300000 | 5.700001 | 2.100000 | 1.999999 | NaN | NaN |
| Afghanistan | AFG | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | ... | 14.362441 | 0.426355 | 12.752287 | 5.600745 | 2.724543 | 1.451315 | 2.260314 | 2.647003 | 1.189228 | 3.911603 |
| Angola | AGO | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | ... | 4.403933 | 3.471976 | 8.542188 | 4.954545 | 4.822628 | 0.943572 | -2.580050 | -0.147213 | -2.003630 | -0.624644 |
| Albania | ALB | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | ... | 3.706892 | 2.545322 | 1.417526 | 1.001987 | 1.774487 | 2.218752 | 3.314805 | 3.802197 | 4.071301 | 2.240070 |
| Andorra | AND | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | ... | -1.974958 | -0.008070 | -4.974444 | -3.547597 | 2.504466 | 1.434140 | 3.709678 | 0.346072 | 1.588765 | 1.849238 |

5 rows × 60 columns

We might be able to hypothesize about why – perhaps a country didn’t exist or didn’t keep reliable records for a period of time.

However, the data as they are contain no additional information that might explain the cause of missingness. So these data are MNAR.

Missing Data Fixes - Dropping

Dropping Missing Data

The easiest approach to missing data is to drop observations with missing values: df.dropna().

| Country Name | Country Code | 1961 | 1962 | 1963 | 1964 | 1965 | 1966 | 1967 | 1968 | 1969 | ... | 2010 | 2011 | 2012 | 2013 | 2014 | 2015 | 2016 | 2017 | 2018 | 2019 |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| Argentina | ARG | 5.427843 | -0.852022 | -5.308197 | 10.130298 | 10.569433 | -0.659726 | 3.191997 | 4.822501 | 9.679526 | ... | 10.125398 | 6.003952 | -1.026420 | 2.405324 | -2.512615 | 2.731160 | -2.080328 | 2.818503 | -2.565352 | -2.088015 |
| Australia | AUS | 2.485769 | 1.296087 | 6.214630 | 6.978522 | 5.983506 | 2.382458 | 6.302620 | 5.095814 | 7.044329 | ... | 2.067417 | 2.462756 | 3.918163 | 2.584898 | 2.533115 | 2.192647 | 2.770652 | 2.300611 | 2.949286 | 2.160956 |
| Austria | AUT | 5.537979 | 2.648675 | 4.138268 | 6.124354 | 3.480175 | 5.642861 | 3.008048 | 4.472313 | 6.275867 | ... | 1.837094 | 2.922797 | 0.680446 | 0.025505 | 0.661273 | 1.014502 | 1.989437 | 2.399588 | 2.580121 | 1.418734 |
| Burundi | BDI | -13.746135 | 9.063158 | 4.135407 | 6.273038 | 3.967226 | 4.612993 | 13.821519 | -0.297884 | -1.459541 | ... | 5.124163 | 4.032602 | 4.446708 | 4.924195 | 4.240652 | -3.900003 | -0.600020 | 0.500010 | 1.609933 | 1.842477 |
| Belgium | BEL | 4.978423 | 5.212004 | 4.351584 | 6.956685 | 3.560660 | 3.155895 | 3.868147 | 4.194130 | 6.629800 | ... | 2.864293 | 1.694514 | 0.739217 | 0.459242 | 1.578533 | 2.041459 | 1.266686 | 1.608087 | 1.812296 | 1.743820 |
| ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... |
| St. Vincent and the Grenadines | VCT | 4.527283 | 3.694262 | -6.265339 | 3.669726 | 0.884935 | 0.000000 | -9.523813 | 6.509706 | 2.860845 | ... | -3.353437 | -0.419296 | 1.382087 | 1.832977 | 1.214039 | 1.330275 | 1.897441 | 1.000358 | 2.163129 | 0.494924 |
| World | WLD | 4.299183 | 5.554137 | 5.350678 | 6.713557 | 5.519644 | 5.768498 | 4.485951 | 6.313528 | 6.113628 | ... | 4.303017 | 3.137774 | 2.518507 | 2.665979 | 2.861098 | 2.873949 | 2.605790 | 3.298628 | 2.976776 | 2.343378 |
| South Africa | ZAF | 3.844751 | 6.177883 | 7.373613 | 7.939782 | 6.122761 | 4.438308 | 7.196576 | 4.153445 | 4.715831 | ... | 3.039731 | 3.284168 | 2.213355 | 2.485200 | 1.846992 | 1.193733 | 0.399088 | 1.414513 | 0.787056 | 0.152583 |
| Zambia | ZMB | 1.361382 | -2.490839 | 3.272393 | 12.214048 | 16.647456 | -5.570310 | 7.919697 | 1.248330 | -0.436916 | ... | 10.298223 | 5.564602 | 7.597593 | 5.057232 | 4.697992 | 2.920375 | 3.776679 | 3.504336 | 4.034378 | 1.441785 |
| Zimbabwe | ZWE | 6.316157 | 1.434471 | 6.244345 | -1.106172 | 4.910571 | 1.523130 | 8.367009 | 1.970135 | 12.428236 | ... | 19.675323 | 14.193913 | 16.665429 | 1.989493 | 2.376929 | 1.779873 | 0.755869 | 4.704035 | 4.829674 | -8.100000 |

119 rows × 60 columns

- Induces information loss, but is otherwise appropriate if data are MCAR.

- Induces bias if data are MAR or MNAR.
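To see how quickly row-wise dropping loses information, consider a small made-up table in which each of three rows is missing a single value. The column names are hypothetical; `subset` is a standard argument of `DataFrame.dropna` that restricts the check to the listed columns.

```python
import numpy as np
import pandas as pd

# Made-up table with scattered missing values.
df = pd.DataFrame({
    "a": [1.0, np.nan, 3.0, 4.0],
    "b": [np.nan, 2.0, 3.0, 4.0],
    "c": [1.0, 2.0, 3.0, np.nan],
})

complete_rows = df.dropna()            # drop any row with a missing value
complete_a = df.dropna(subset=["a"])   # drop only rows missing column 'a'

print(len(df), len(complete_rows), len(complete_a))   # 4 1 3
```

Only 3 of the 12 values are missing, yet dropping incomplete rows discards 3 of the 4 observations. Restricting the drop to the variables an analysis actually uses keeps more of the data.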

Missing Data Fixes - Mean Imputation

Perils of Mean Imputation

Imputation is the process of replacing missing values with estimated values, typically statistical estimates.

Mean Imputation

- Imputing too many missing values distorts the distribution of sample values.
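The distortion is easy to see in the spread of the data. In the sketch below, 40% of a made-up sample is removed completely at random and then filled in with the observed mean. The sample and the missingness rate are arbitrary assumptions.

```python
import numpy as np
import pandas as pd

rng = np.random.default_rng(100)

# Made-up sample with about 40% of values removed completely at random.
x = pd.Series(rng.normal(loc=0.0, scale=1.0, size=1_000))
x_missing = x.mask(rng.random(x.size) < 0.4)

# Mean imputation: replace every missing value with the observed mean.
x_imputed = x_missing.fillna(x_missing.mean())

print(f"std of observed values: {x_missing.std():.3f}")
print(f"std after imputation:   {x_imputed.std():.3f}")
```

The mean is unchanged by construction, but the standard deviation shrinks because every imputed value sits exactly at the center of the distribution.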

Do’s and don’t’s

Do:

- Always check for missing values upon import.

- Tabulate the proportion of observations with missingness

- Tabulate the proportion of values for each variable that are missing

- Take time to find out the reasons data are missing.

- Determine which outcomes are coded as missing.

- Investigate the physical mechanisms involved.

- Report missing data if they are present.

Don’t:

- Use software defaults for handling missing values blindly.

- Drop missing values if data are not MCAR.
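The two tabulations in the "Do" list can be sketched in pandas as follows. The table is made up and stands in for freshly imported data.

```python
import numpy as np
import pandas as pd

# Made-up table standing in for freshly imported data.
df = pd.DataFrame({
    "x": [1.0, np.nan, 3.0, 4.0, 5.0],
    "y": [np.nan, np.nan, 1.0, 0.0, 1.0],
    "z": ["a", "b", "c", "d", "e"],
})

# Proportion of values missing in each variable (column).
prop_missing_by_variable = df.isna().mean()

# Proportion of observations (rows) with at least one missing value.
prop_rows_with_missing = df.isna().any(axis=1).mean()

print(prop_missing_by_variable)
print(prop_rows_with_missing)
```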

Case Study: Voter Fraud

Miller Case

What is the Miller case?

- On November 21, 2020, a professor at Williams College, Steven Miller, filed an affidavit alleging that an analysis of phone surveys showed that among registered Republican voters in PA:

- ~40K mail ballots were fraudulently requested;

- ~48K mail ballots were not counted.

President Donald J. Trump amplified the statement in a tweet, the Chairman of the Federal Elections Commission (FEC) referenced the statement as indicative of fraud, and a conservative group prominently featured it in a legal brief seeking to overturn the Pennsylvania election results. (Samuel Wolf, Williams Record, 11/25/20)

The Miller affidavit was criticized by statisticians as incorrect, irresponsible, and unethical.

The flawed assumption

On a purely mathematical level, Miller’s calculations were standard. The key issue was a single flawed assumption:

The analysis is predicated on the assumption that the responders are a representative sample of the population of registered Republicans in Pennsylvania for whom a mail-in ballot was requested but not counted, and responded accurately to the questions during the phone calls. (Miller affidavit)

Essentially, Miller made two critical mistakes in the analysis:

- Failing to critically assess the sampling design and scope of inference.

- Ignoring missing data.

We will conduct a post mortem and examine these issues.

Miller is a number theorist, not a trained survey statistician, so on some level his mistakes were understandable, but they did a lot of damage.

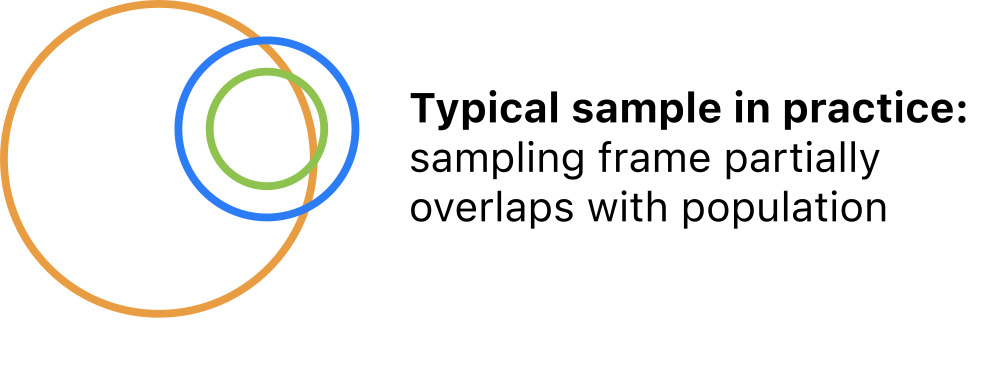

Miller Case - Sampling design

There were 165,412 unreturned mail ballots requested by registered Republicans in PA.

Those voters were surveyed by phone by Matt Braynard’s private firm External Affairs on behalf of the Voter Integrity Fund.

We don’t really know how they obtained and selected phone numbers or exactly what the survey procedure was, but here’s what we do know:

- ~23K individuals were called on Nov. 9-10.

- The ~2.5K who answered were asked if they were the registered voter or a family member.

- If they said yes, they were asked if they requested a ballot.

- Those who requested a ballot were asked if they mailed it.

Miller Case - Spot any immediate issues?

Let’s look in more detail

- ~23K individuals were called on Nov. 9-10

- How did they pick who to call?

- Narrow snapshot in time.

- 9th and 10th were a Monday and Tuesday.

- Mail ballots were still being counted; don’t actually know whether returned ballots were ultimately counted or not by this time.

- The ~2.5K who answered were asked if they were the registered voter or a family member.

- Family members could answer on behalf of one another.

- If they said yes, they were asked if they requested a ballot.

- Misleading question: there’s a registration checkbox; you don’t have to file an explicit request in Pennsylvania.

- Those who requested a ballot were asked if they mailed it.

- What about voters who claimed not to request a ballot? Did they receive one, and if so, did they mail it?

Miller Case - Survey schematic

Miller Survey Schematic

Miller Case - Sampling design

Problematic Sampling Design

Population: Republicans registered to vote in PA who had mail ballots officially requested that hadn’t been returned or counted by November 9?

Sampling frame: unknown; source of phone numbers unspecified.

Sample: 2684 registered Republicans or family members of registered Republicans who had a mail ballot officially requested in PA and answered survey calls on Nov. 9 or 10.

Sampling mechanism: nonrandom; depends on availability during calling hours on Monday and Tuesday, language spoken, and willingness to talk.

This is not a representative sample of any meaningful population.

Miller Case - Missingness

What is missing?

Respondents hung up at every stage of the survey.

This is probably not at random – individuals who do not believe voter fraud occurred are more likely to hang up.

However, we don’t have any information about whether respondents think fraud occurred.

So data are MNAR, and likely over-represent people more likely to claim they never requested a ballot.

Miller Case - The Analysis

Calculations

- Miller computed the proportion of respondents who reported not requesting a ballot among those who either voted in person, didn’t request a ballot, or did request a ballot.

- He ignored those who weren’t sure and those who hung up.

- He claimed that the estimated number of fraudulent requests was:

\[\left(\frac{556}{1150 + 556 + 544}\right)\times 165{,}412 = 0.2471 \times 165{,}412 = 40{,}875\]
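The arithmetic behind this figure can be checked directly. The variable names below are made up; the counts are the ones that appear in the calculation.

```python
# Counts as they appear in the calculation above.
not_requested = 556
others = 1150 + 544            # the other two groups in the denominator
unreturned_ballots = 165_412

proportion = not_requested / (not_requested + others)
estimate = proportion * unreturned_ballots

print(f"{proportion:.4f}")   # 0.2471
print(round(estimate))       # 40875
```

The computation itself is just a sample proportion scaled up to the 165,412 unreturned ballots, so its validity rests entirely on whether that proportion is representative.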

Miller Case - Simulation

How much bias might there be?

It’s not too tricky to envision sources of bias that would affect Miller’s results. How much bias might there be?

This is an oversimplification, but if we are willing to assume that

- Respondents all know whether they actually requested a ballot and tell the truth.

- Respondents who didn’t request a ballot are more likely to be reached.

- Respondents who did request a ballot are more likely to hang up during the interview.

Then we can show through a simple simulation that an actual fraud rate of under 1% will be estimated at over 20% almost all the time.

Miller Case - Simulated population

First let’s generate a population of 150K voters.
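The population-generating cell is not reproduced in this copy of the slides. A minimal sketch that is compatible with the names used in the later code (`population`, `requested`, `true_prop`) might look like this. The value of `true_prop` and the population size `N` are assumptions; the lecture only states that the true rate is under 1% and that the population has about 150K voters.

```python
import numpy as np
import pandas as pd

rng = np.random.default_rng(41021)

# Assumed true proportion of ballots that were not actually requested.
# (Hypothetical value; the lecture only says the rate is under 1%.)
true_prop = 0.009
N = 150_000

# requested = 1 if the voter really requested a ballot, 0 otherwise.
population = pd.DataFrame({
    "requested": rng.binomial(n=1, p=1 - true_prop, size=N),
})

print(population["requested"].value_counts())
```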

Miller Case - Simulated Sample

Introducing sampling weights

Then let’s introduce sampling weights based on the conditional probability that an individual will talk with the interviewer given whether they requested a ballot or not.

```python
# assume respondents tell the truth
p_request = 1 - true_prop
p_nrequest = true_prop

# assume respondents who claim no request are 15x more likely to talk
talk_factor = 15

# observed response rate
p_talk = 0.09

# conditional probability of talking given claimed request or not
p_talk_request = p_talk/(p_request + talk_factor*p_nrequest)
p_talk_nrequest = talk_factor*p_talk_request

# draw sample weighted by conditional probabilities
np.random.seed(41021)
population.loc[population.requested == 1, 'sample_weight'] = p_talk_request
population.loc[population.requested == 0, 'sample_weight'] = p_talk_nrequest
samp = population.sample(n = 2500, replace = False, weights = 'sample_weight')
```

Miller Case - Simulated Missing Mechanism

Introducing missing values

Then let’s introduce missing values at different rates for respondents who requested a ballot and respondents who didn’t.

```python
# assume respondents who affirm requesting are 4x more likely to hang up or deflect
missing_factor = 4

# observed missing/unsure rate
p_missing = 0.25

# conditional probabilities of missing given request status
p_missing_nrequest = p_missing/(0.8 + missing_factor*0.2)
p_missing_request = missing_factor*p_missing_nrequest

# input missing values
np.random.seed(41021)
samp.loc[samp.requested == 1, 'missing_weight'] = p_missing_request
samp.loc[samp.requested == 0, 'missing_weight'] = p_missing_nrequest
samp['missing'] = np.random.binomial(n = 1, p = samp.missing_weight.values)
samp.loc[samp.missing == 1, 'requested'] = float('nan')
```

Miller Case - Simulated Result

If we then drop all the missing values and calculate the proportion of respondents who didn’t request a ballot, we get:

So Miller’s result is expected if the sampling and missing mechanisms introduce bias, even if the true rate of fraudulent requests is under 1% – on the order of 1,000 ballots.
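For readers who want to rerun the whole argument, here is a self-contained sketch that chains the three steps (population, weighted sample, differential missingness) and repeats them over several seeds. It is a re-implementation under stated assumptions, not the lecture's original cells: `true_prop = 0.009` is a hypothetical value under 1%, the function name `simulate_estimate` is made up, and the other constants are copied from the code above.

```python
import numpy as np
import pandas as pd

def simulate_estimate(true_prop=0.009, N=150_000, n=2_500,
                      talk_factor=15, missing_factor=4, p_missing=0.25, seed=0):
    """One run of the biased survey; returns the estimated 'no request' rate."""
    rng = np.random.default_rng(seed)
    pop = pd.DataFrame({"requested": rng.binomial(1, 1 - true_prop, size=N)})

    # Non-requesters are talk_factor times more likely to be sampled.
    weights = np.where(pop["requested"] == 1, 1.0, float(talk_factor))
    samp = pop.sample(n=n, replace=False, weights=weights, random_state=seed)

    # Requesters are missing_factor times more likely to go missing
    # (same 0.8 / 0.2 constants as in the lecture code).
    q_no = p_missing / (0.8 + missing_factor * 0.2)
    q = np.where(samp["requested"] == 1, missing_factor * q_no, q_no)
    observed = samp.loc[rng.random(n) >= q, "requested"]

    # Drop the missing responses and report the share claiming no request.
    return float((observed == 0).mean())

estimates = [simulate_estimate(seed=s) for s in range(20)]
print(f"true rate: 0.009, mean estimate: {np.mean(estimates):.3f}")
```

Under these assumptions the estimates land far above the true rate in every run, which is the qualitative point of the slide. The exact numbers depend on the assumed factors and will differ from the lecture's own output.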

Miller Case - Takeaways

Main mistakes

- The main mistakes were ignoring the sampling design and missing data.

- In other words, proceeding to analyze the data without first getting well-acquainted.

- We should assume these were honest mistakes.

After the affidavit was filed, a colleague spoke with Miller; he recanted and acknowledged his mistakes, but this received far less attention than the conclusions in the affidavit.

Professional ethics and social responsibility

The American Statistical Association publishes ethical guidelines for statistical practice. The Miller case violated a large number of these, most prominently, that an ethical practitioner:

Reports the sources and assessed adequacy of the data, accounts for all data considered in a study, and explains the sample(s) actually used.

In publications and reports, conveys the findings in ways that are both honest and meaningful to the user/reader. This includes tables, models, and graphics.

In publications or testimony, identifies the ultimate financial sponsor of the study, the stated purpose, and the intended use of the study results.

When reporting analyses of volunteer data or other data that may not be representative of a defined population, includes appropriate disclaimers and, if used, appropriate weighting.

Conclusion

✅ What we covered

- Sampling design.

- Terminology.

- Sampling mechanisms.

- Missing data mechanisms.

- Statistical bias.

- Case study in problematic design - Miller case.

📅 What’s next?

- Basic data preparation in Python.

- Missing data.

- Duplicates.

- Inconsistent data types.

- Mixed data formats.

- Invalid values.

- Labelling issues.

- Parsing dates.